Service Codex

I recently picked up the Cocktail Codex. The book argues that there are only six families of cocktails: the old-fashioned, martini, daiquiri, sidecar, whisky highball, and flip. This got me thinking: are there similar templates for services?

Segment was an early adopter of microservices, and Stripe also uses a service-oriented architecture. The majority of our workloads follow one of three patterns: RPC, workers, and tasks.

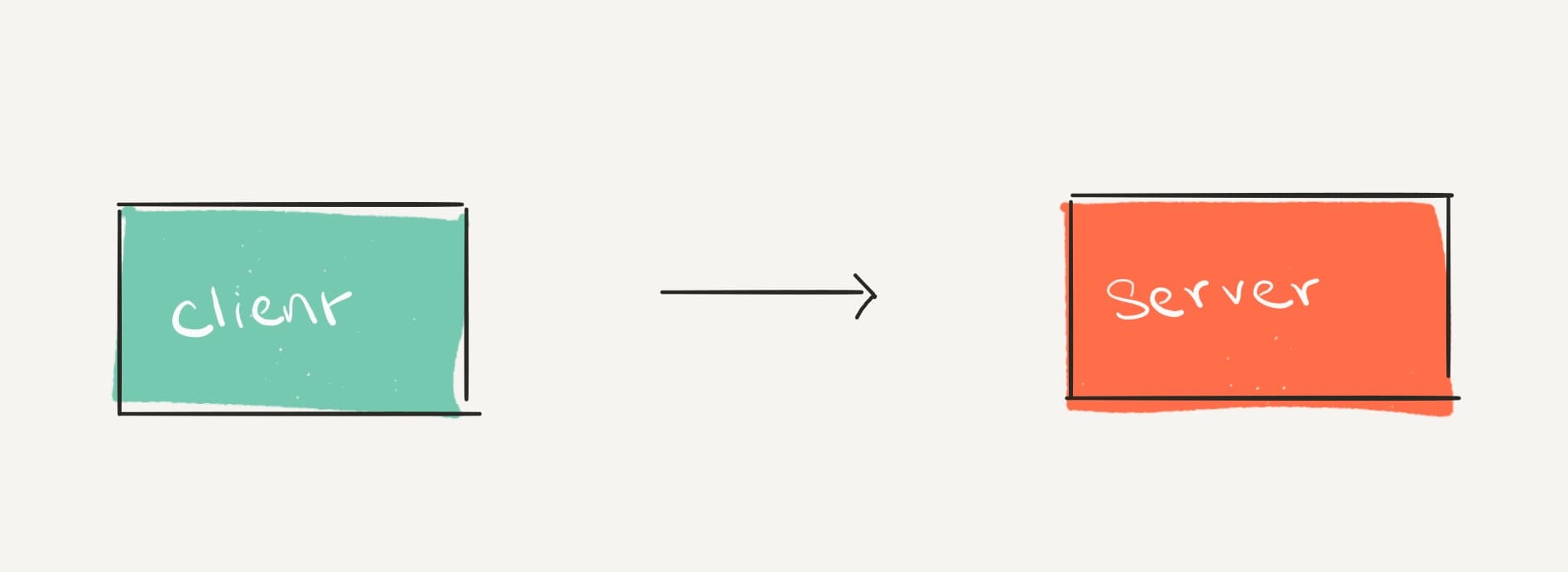

RPC

In Remote Procedure Call (RPC) services, a client initiates requests and the server must fulfill them. Clients and servers must agree on a protocol to communicate: HTTP is the most popular choice, and gRPC is another.

These systems are characterized by the synchronous nature of the request-response lifecycle between client and server, which requires keeping an open connection. This consumes resources, so RPC is suited to workloads that complete within a few seconds.

Throughput is largely determined by the number of requests from the client, so it's important to ensure that servers are scaled up to handle the load, which incurs additional cost, and that clients are robust enough to handle downtime, which incurs additional complexity.
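One common way to make a client robust to brief downtime is to retry with exponential backoff. The sketch below is a minimal illustration, not a production client: `ServerUnavailable`, `call_with_retries`, and `make_flaky` are hypothetical names invented for this example, and the flaky server is simulated in-process rather than called over a network.

```python
import time


class ServerUnavailable(Exception):
    """Hypothetical error a client raises when the server cannot be reached."""


def call_with_retries(call, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Invoke `call`, retrying on ServerUnavailable with exponential backoff."""
    for attempt in range(attempts):
        try:
            return call()
        except ServerUnavailable:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** attempt)  # waits 0.1s, 0.2s, 0.4s, ...


def make_flaky(failures):
    """Simulate a server that fails `failures` times, then succeeds."""
    state = {"left": failures}

    def call():
        if state["left"] > 0:
            state["left"] -= 1
            raise ServerUnavailable()
        return "ok"

    return call


delays = []  # record the waits instead of actually sleeping
result = call_with_retries(make_flaky(2), sleep=delays.append)
print(result, delays)
```

This is the "additional complexity" in miniature: even a toy client has to decide how many times to retry, how long to wait, and when to give up.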

The service level indicator (SLI) of an RPC server is measured by the relative frequency of response codes, for example, the percentage of non-5xx responses.

SLI = Good Responses * 100 / Valid Requests
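As a rough sketch, the formula can be computed from a window of HTTP status codes. The function name `rpc_sli` and the sample window are made up for illustration, and the sketch assumes every request in the window counts as valid and that any non-5xx response (including 4xx) counts as good.

```python
from typing import Iterable


def rpc_sli(status_codes: Iterable[int]) -> float:
    """Percentage of valid requests that received a non-5xx response."""
    codes = list(status_codes)
    if not codes:
        raise ValueError("no valid requests in the window")
    good = sum(1 for code in codes if code < 500)
    return good * 100 / len(codes)


# Hypothetical window of eight responses, two of them server errors.
window = [200, 200, 404, 500, 200, 503, 201, 200]
print(f"SLI = {rpc_sli(window):.1f}%")  # prints "SLI = 75.0%"
```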

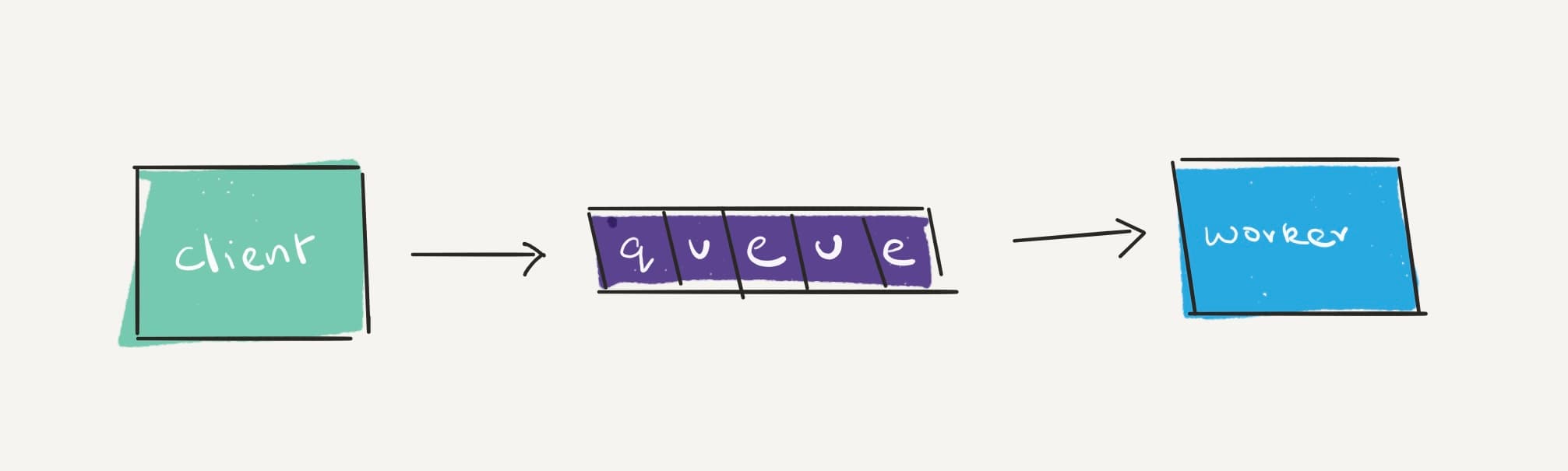

Workers

Clients (also called producers) write their requests to a queue, and workers (also called consumers) consume messages from this queue.

These systems are characterized by asynchronous communication, which suits them to workloads that take longer to complete -- more than a few seconds, but less than a few minutes.
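To make the producer and consumer roles concrete, here is a minimal in-process sketch using Python's standard `queue` and `threading` modules. A real system would use a networked queue; the squaring "work" and the `None` sentinel used to stop the worker are assumptions made for this example.

```python
import queue
import threading


def worker(jobs: queue.Queue, results: list) -> None:
    """Consume messages until the sentinel (None) arrives."""
    while True:
        item = jobs.get()
        if item is None:  # sentinel: no more work
            jobs.task_done()
            break
        results.append(item * item)  # stand-in for real work
        jobs.task_done()


jobs = queue.Queue()
results = []
consumer = threading.Thread(target=worker, args=(jobs, results))
consumer.start()

# The producer enqueues and moves on; it never waits for a response.
for n in range(5):
    jobs.put(n)
jobs.put(None)

jobs.join()      # block until every message has been processed
consumer.join()
print(results)   # prints [0, 1, 4, 9, 16]
```

Note that `put` returns immediately, which is the asynchronous part: the producer's pace is decoupled from the worker's.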

Queues are critical components of these systems, as they implement the asynchronous communication between clients and workers. They may provide durability, ordering, batching, priority and other guarantees.

Queues are the magic ingredient that allows workers to scale better than RPC services. Adding capacity to a queue to handle a traffic spike is often easier and significantly cheaper than deploying more services.

The SLI of a worker can typically be measured by the queue backlog - such as the number of pending items in the queue, or the age of the oldest item in the queue.

SLI = Pending Items in Queue
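Both backlog measures can be derived from the pending messages, assuming each one records when it was enqueued. The `Message` type and `backlog_sli` function below are hypothetical; a managed queue would usually expose these numbers as metrics rather than make you compute them.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class Message:
    body: str
    enqueued_at: float  # seconds since some epoch


def backlog_sli(pending: Sequence[Message], now: float) -> Tuple[int, float]:
    """Return (pending item count, age in seconds of the oldest item)."""
    if not pending:
        return 0, 0.0
    oldest = min(message.enqueued_at for message in pending)
    return len(pending), now - oldest


# Hypothetical snapshot of a queue taken at t=160s.
pending = [Message("a", 100.0), Message("b", 130.0), Message("c", 155.0)]
depth, oldest_age = backlog_sli(pending, now=160.0)
print(depth, oldest_age)  # prints "3 60.0"
```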

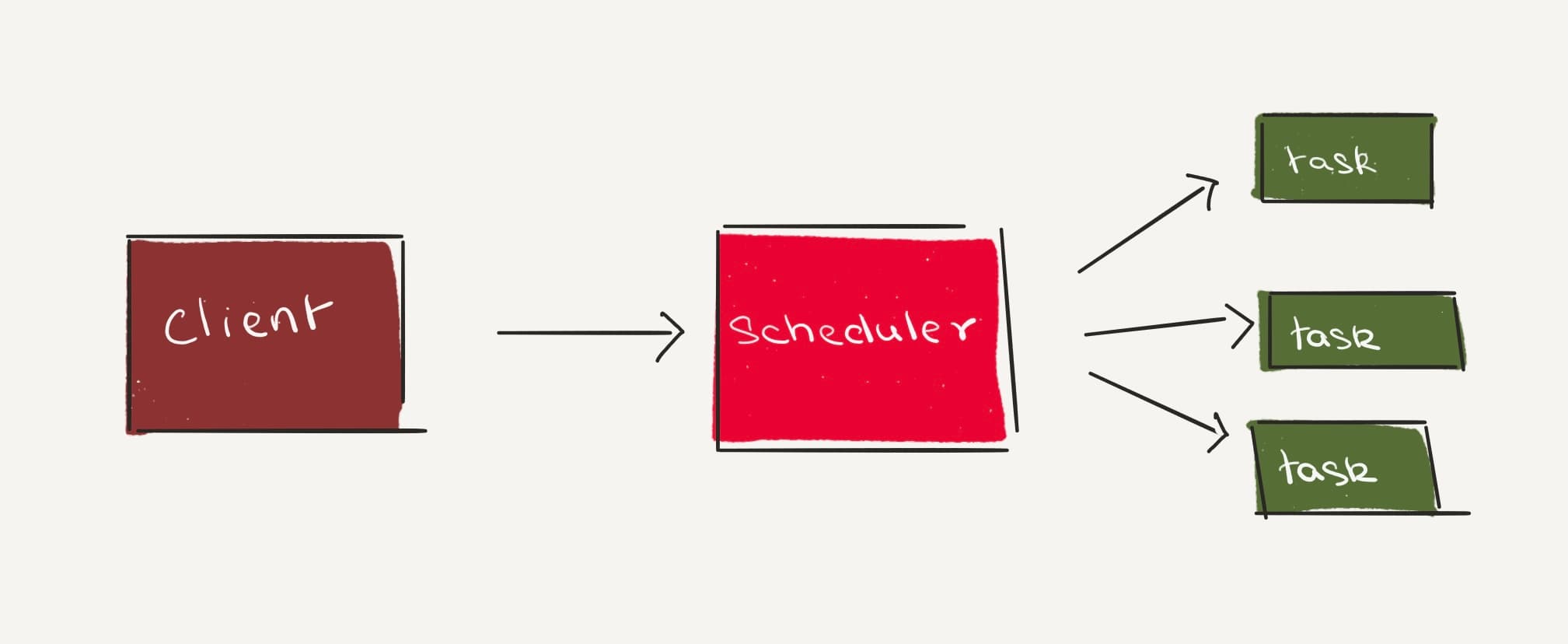

Tasks

A task is a finite unit of work: it starts, runs to completion, and exits.

Unlike workers and RPC servers -- which are constantly running and waiting for work -- tasks are lazy. They’re invoked only when there’s work to be done.

A scheduler determines when tasks need to be launched. For instance, a cron scheduler will arrange tasks to be performed at a given interval.

Tasks differ from workers in that they are executed in isolation from other tasks, which makes them suited to workloads with execution times of more than a few minutes, and often hours.

As tasks get more complex, they'll be decomposed into smaller tasks, and execution is managed by an orchestration framework such as Airflow or Step Functions. This allows for recovering from failures efficiently.
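The recovery benefit can be shown with a toy pipeline that remembers which steps have finished, so a rerun resumes at the failed step instead of starting over. This is only a sketch of the idea and not how Airflow or Step Functions work internally; `run_pipeline` and the three step functions are invented for this example.

```python
def run_pipeline(steps, completed):
    """Run named steps in order, skipping any already recorded in `completed`."""
    for name, step in steps:
        if name in completed:
            continue
        step()  # an exception stops the run; `completed` keeps the progress
        completed.add(name)


calls = []
attempts = {"transform": 0}


def extract():
    calls.append("extract")


def transform():
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise RuntimeError("transient failure")  # fail on the first try only
    calls.append("transform")


def load():
    calls.append("load")


steps = [("extract", extract), ("transform", transform), ("load", load)]
completed = set()
try:
    run_pipeline(steps, completed)
except RuntimeError:
    run_pipeline(steps, completed)  # the rerun resumes at "transform"

print(calls)  # prints ['extract', 'transform', 'load']
```

The expensive `extract` step runs only once, even though the pipeline was launched twice.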

The SLI of tasks can be measured by their exit codes.

SLI = Good Exit Codes * 100 / Valid Invocations
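Here is a small sketch of that measurement, assuming each task runs as its own process and that exit code 0 is the only "good" outcome. The tasks are throwaway Python one-liners launched through `subprocess.run`; `run_task` and `task_sli` are hypothetical helper names.

```python
import subprocess
import sys
from typing import Iterable


def run_task(code: str) -> int:
    """Launch a task as a separate Python process and return its exit code."""
    return subprocess.run([sys.executable, "-c", code]).returncode


def task_sli(exit_codes: Iterable[int]) -> float:
    """Percentage of valid invocations that exited with code 0."""
    codes = list(exit_codes)
    if not codes:
        raise ValueError("no valid invocations in the window")
    good = sum(1 for code in codes if code == 0)
    return good * 100 / len(codes)


# Four invocations; the second one exits with a non-zero code.
exit_codes = [
    run_task("pass"),
    run_task("raise SystemExit(3)"),
    run_task("pass"),
    run_task("pass"),
]
print(exit_codes, task_sli(exit_codes))
```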

Infinite Possibilities

Unlike cocktails, these blueprints are composable. For instance, workers often add an RPC service to accept requests. This lets them hide the queue from clients and signal synchronous errors back to the client. Similarly, many tasks add an RPC service so that clients can monitor task execution. While this list is not exhaustive, combining these blueprints will allow you to start small, and maybe build the next Segment or Stripe!